Is machine learning that complex to understand?

Search the Internet and you will come across many academic definitions of machine learning (ML). Take this typical one from Stanford as an example:

“Machine learning is the science of getting computers to act without being explicitly programmed”.

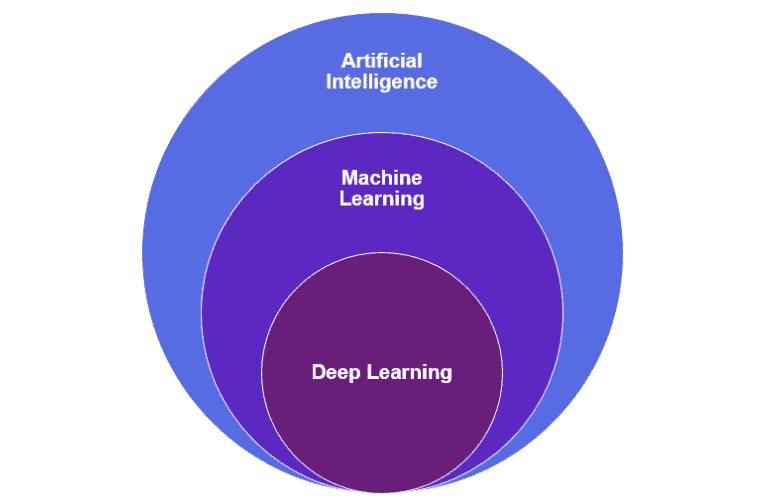

In more detail, it is the science of getting computers to learn, and to improve that learning over time, from the data and information we humans provide. While the ultimate goal of AI is to recreate or imitate human intelligence, machine learning was developed to build predictive models for specific tasks. It is a subset of AI in which computers are given the ability to learn without being explicitly programmed. Within machine learning, you will also often hear the term deep learning: a subset of ML in which multi-layered neural networks learn and adapt from vast amounts of data.

But set those academic definitions aside for a moment. Machine learning has become so pervasive in daily life that you encounter its applications every day without even noticing. Doubt it? Here are some examples.

Have you ever wondered how Siri on your iPhone recognises your voice the moment you say “Hey Siri!”? Have you ever been curious about how Spotify suggests songs that match your taste so well? Heard enough about self-driving cars? All of these are typical applications of machine learning. Sound more familiar?

Let’s take a closer look at how Spotify might build a suggested playlist. Say Spotify wants to predict which new songs a particular user will enjoy. Before making any prediction, the platform would probably start by studying the user’s existing library: the songs they have listened to, played repeatedly and ‘liked’. The system then analyses traits of those songs, such as acoustic elements, genre and tempo. This is where machine learning comes in, since this kind of analysis can be done with neural networks, a model family widely used in AI and machine learning. Based on the analysis, the system predicts which songs are likely to match the user’s preferences. As the user listens to, likes or dislikes the suggested tracks, this new data can be fed back into the same models to update and improve the predictions.
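To make the idea more concrete, here is a minimal content-based sketch in Python. It is not Spotify’s actual method: instead of neural networks it swaps in a simple Rocchio-style user profile (the average feature vector of liked tracks, nudged away from disliked ones) and ranks unrated tracks by cosine similarity. The track names and three-number feature vectors are hypothetical.

```python
import math

# Hypothetical audio features per track: (acousticness, energy, tempo scaled to 0-1).
TRACKS = {
    "track_a": (0.90, 0.20, 0.30),
    "track_b": (0.80, 0.30, 0.35),
    "track_c": (0.10, 0.90, 0.80),
    "track_d": (0.20, 0.80, 0.75),
    "track_e": (0.85, 0.25, 0.40),
}

def cosine(u, v):
    """Cosine similarity between two feature vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0

def mean_vector(names):
    """Component-wise average of the named tracks' feature vectors."""
    vectors = [TRACKS[n] for n in names]
    return [sum(column) / len(vectors) for column in zip(*vectors)]

def user_profile(liked, disliked=(), beta=0.5):
    """Rocchio-style profile: mean of liked tracks minus beta times mean of disliked tracks."""
    profile = mean_vector(liked)
    if disliked:
        negative = mean_vector(disliked)
        profile = [p - beta * n for p, n in zip(profile, negative)]
    return profile

def recommend(liked, disliked=(), k=2):
    """Rank tracks the user has not rated yet by similarity to their profile."""
    profile = user_profile(liked, disliked)
    rated = set(liked) | set(disliked)
    candidates = [t for t in TRACKS if t not in rated]
    return sorted(candidates, key=lambda t: cosine(profile, TRACKS[t]), reverse=True)[:k]

print(recommend(["track_a"]))                          # ['track_e', 'track_b']
print(recommend(["track_a", "track_c"], ["track_b"]))  # feedback shifts the profile
```

Passing newly liked or disliked tracks back into `recommend` changes the profile, which mirrors the feedback loop described above in a very simplified form.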

Where’s the value of machine learning?

Patterns are identified and improved over time

A key strength of machine learning is its ability to review large volumes of data quickly and accurately. Machines learn from the data, identify patterns and trends within it in a short time, and then make predictions. As the data keeps growing and changing, the algorithm gains more experience, so its predictions can become more accurate over time.

Data alone, however, does not do all the work. The beauty of machine learning comes from two essential elements: the dataset and the algorithm. Without a good dataset, no matter how sophisticated the algorithm, the outcome will not be optimal. Conversely, even an excellent dataset will produce weaker results if the algorithm is not suited to the problem.

A typical example is weather forecasting, where forecasts are based on weather patterns and events that occurred in the past. The larger and more detailed the dataset, and the better suited the algorithm, the more accurate the predictions tend to be.
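As a toy illustration of learning from past patterns, the sketch below fits an ordinary least-squares line that maps one day’s temperature to the next day’s, using a hypothetical history of daily readings, and then forecasts the day after the last reading. Real forecasting models use far richer data and algorithms; this only shows the fit-then-predict idea.

```python
def fit_line(xs, ys):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x

def forecast_next(history):
    """Learn 'today -> tomorrow' from consecutive past days, then predict the next day."""
    slope, intercept = fit_line(history[:-1], history[1:])
    return slope * history[-1] + intercept

# Hypothetical daily temperatures in degrees Celsius.
print(forecast_next([10, 12, 14, 16]))  # 18.0: the fitted pattern is tomorrow = today + 2
```

Adding more historical days gives the fit more examples to learn from, which is the small-scale version of "the larger the dataset, the better the prediction".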

Adaptability with minimal human intervention

Rapid adaptation with minimal human intervention is one of the primary advantages of machine learning. Antivirus programs are a good example: machine learning is used together with other AI techniques to analyse and respond to new threats. Machine learning detects potential threats, and AI then applies the appropriate response. Microsoft’s Windows Defender, for instance, employs multiple layers of machine learning to identify and block perceived threats and keep users safe.

The automated nature of machine learning also saves time and cost by freeing employees from manual tasks. Humans only need to step in to validate and monitor the machine’s output, making sure it is correct and applicable.

Applicable across a broad spectrum of sectors

Like any technology, machine learning is not a perfect match for every company in every sector, since it must be aligned with business needs and objectives. Even so, given the benefits it shares with related technologies such as AI and predictive analytics, it can be applied across a wide range of sectors.

For instance, a hospital in the USA collaborated with a non-profit technology and analytics company to develop a machine-learning-driven model that predicts an individual’s COVID-19 exposure risk. Predictions are based on population density and proximity to positive cases. After validating and monitoring the model’s results, health institutions can then provide appropriate support for each case.

Machine learning and artificial intelligence at NashTech

In the age of Industry 4.0, the world is changing rapidly, and we must constantly update and adapt to keep pace. Building data-driven, AI-enabled applications for our customers is therefore one of our firm, ongoing goals. That’s why, since 2018, NashTech has invested heavily in researching AI/ML, from foundational work with commercial off-the-shelf (COTS) AI products to more advanced, in-depth research.

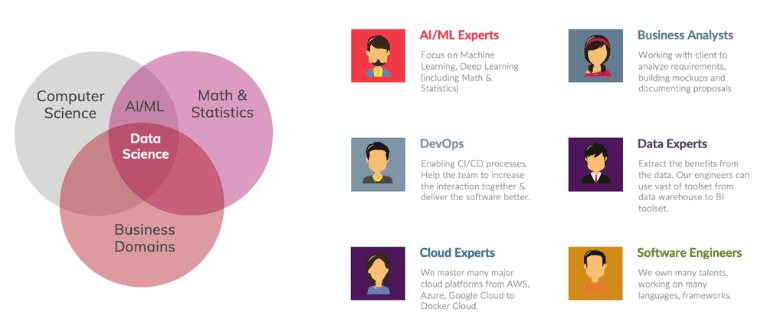

In exploring machine learning and AI, NashTech has carried out a wide range of activities: building knowledge of mathematics and statistics, researching machine learning and deep learning algorithms (supervised, unsupervised and reinforcement learning), building proofs of concept (PoCs), training our people to form a dedicated AI team, and working closely with our customers to discover new value through AI adoption.

Turning an AI application into something usable requires a huge effort from a dedicated AI/ML team, which comprises:

- Business analysts: the process starts with our business analysts, who ask questions to uncover new insights and business challenges

- Data engineers: gather the right dataset or run Extract-Transform-Load (ETL) processes to standardise the data sources

- AI/ML engineers: experiment with and build AI models

- DevOps & cloud experts: bring the whole system into staging and production environments
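The Extract-Transform-Load step done by data engineers can be sketched with Python’s standard library. The CSV columns, the two accepted date formats and the `results` table below are hypothetical; the point is that the transform step standardises inconsistent source values (stray spaces, mixed-case country codes, different date formats) before loading them into one store, here an in-memory SQLite database.

```python
import csv
import io
import sqlite3
from datetime import datetime

# Hypothetical raw export: inconsistent spacing, casing and date formats.
RAW_CSV = """candidate_id,country,score,exam_date
001, vn ,5.5,2021-03-04
002,UK ,6,04/03/2021
"""

DATE_FORMATS = ("%Y-%m-%d", "%d/%m/%Y")  # accepted source formats (assumed)

def parse_date(text):
    """Normalise a date string in any accepted format to ISO 8601 (YYYY-MM-DD)."""
    for fmt in DATE_FORMATS:
        try:
            return datetime.strptime(text.strip(), fmt).date().isoformat()
        except ValueError:
            continue
    raise ValueError(f"Unrecognised date: {text!r}")

def extract(raw_csv):
    """Extract: read raw rows as dictionaries keyed by column name."""
    return list(csv.DictReader(io.StringIO(raw_csv)))

def transform(rows):
    """Transform: trim, standardise casing, cast types and normalise dates."""
    return [
        {
            "candidate_id": row["candidate_id"].strip(),
            "country": row["country"].strip().upper(),
            "score": float(row["score"]),
            "exam_date": parse_date(row["exam_date"]),
        }
        for row in rows
    ]

def load(rows, conn):
    """Load: write the cleaned rows into a single results table."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS results "
        "(candidate_id TEXT, country TEXT, score REAL, exam_date TEXT)"
    )
    conn.executemany(
        "INSERT INTO results VALUES (:candidate_id, :country, :score, :exam_date)", rows
    )
    conn.commit()

conn = sqlite3.connect(":memory:")
load(transform(extract(RAW_CSV)), conn)
print(conn.execute("SELECT * FROM results ORDER BY candidate_id").fetchall())
# [('001', 'VN', 5.5, '2021-03-04'), ('002', 'UK', 6.0, '2021-03-04')]
```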

This complex pipeline needs to run at a fast pace so that we can achieve our goals, deliver in small sprint iterations and keep improving after every release.

Fortunately, at NashTech we excel in applying agile practices in the projects we run for global clients, so we are very confident in building that DevOps culture for AI and machine learning.

NashTech’s case studies

NashTech is currently applying machine learning in several projects for international clients across sectors.

Education/EdTech

Demand for English exams such as IELTS and PTE is increasing, and meeting the resulting demand for assessment and scoring is one of the key challenges education institutions need to solve. The industry currently lacks enough examiners, and the cost of having them mark papers is high.

NashTech has built a solution to this problem for a client that is one of the leading education technology (EdTech) pioneers. Like any machine learning application, it has two main phases: establishing a dataset the machine can learn from, and applying algorithms to make predictions.

- Dataset preparation: we collected and consolidated past exams, results, benchmarks and other historical data from seven countries, gathered over several years. The dataset was split 80% for training and 20% for testing

- Applying algorithms: we applied deep learning algorithms such as long short-term memory (LSTM), a recurrent neural network (RNN) architecture used for classifying, processing and making predictions on sequential data, to assess the exam papers
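For readers curious about what an LSTM actually computes, here is a minimal NumPy sketch of the forward pass: each step combines the current input with the previous hidden state through input, forget and output gates, and the final hidden state feeds a linear layer over the seven score bands (0 to 6). The weights are random placeholders and the input is assumed to be a sequence of word vectors; this is not NashTech’s model, and in practice the weights would be learned by training, typically with a framework such as PyTorch or TensorFlow.

```python
import numpy as np

def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))

class LSTMScorer:
    """Untrained LSTM encoder plus a linear head over score bands; weights are random placeholders."""

    def __init__(self, input_dim, hidden_dim, n_bands=7, seed=0):
        rng = np.random.default_rng(seed)
        self.hidden_dim = hidden_dim
        # One stacked matrix for the input (i), forget (f), candidate (g) and output (o) gates.
        self.W = rng.normal(0.0, 0.1, size=(4 * hidden_dim, input_dim + hidden_dim))
        self.b = np.zeros(4 * hidden_dim)
        self.W_out = rng.normal(0.0, 0.1, size=(n_bands, hidden_dim))

    def step(self, x, h, c):
        """One LSTM time step: returns the new hidden state h and cell state c."""
        H = self.hidden_dim
        z = self.W @ np.concatenate([x, h]) + self.b
        i = sigmoid(z[:H])           # input gate: how much new information to write
        f = sigmoid(z[H:2 * H])      # forget gate: how much of the old cell state to keep
        g = np.tanh(z[2 * H:3 * H])  # candidate values for the cell state
        o = sigmoid(z[3 * H:])       # output gate: how much of the cell to expose
        c = f * c + i * g
        h = o * np.tanh(c)
        return h, c

    def predict_band(self, sequence):
        """Run the LSTM over a (timesteps, input_dim) array and pick the highest-scoring band."""
        h = np.zeros(self.hidden_dim)
        c = np.zeros(self.hidden_dim)
        for x in sequence:
            h, c = self.step(x, h, c)
        logits = self.W_out @ h
        return int(np.argmax(logits)), logits

# Hypothetical input: 12 word vectors of size 8 standing in for an essay.
essay = np.random.default_rng(1).normal(size=(12, 8))
model = LSTMScorer(input_dim=8, hidden_dim=16)
band, logits = model.predict_band(essay)
print(band, logits.shape)
```

Because the cell state `c` is carried from step to step and only partly overwritten, the model can keep information from early in a long answer, which is why recurrent architectures like LSTM suit text assessment.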

The results from the trained model are promising, with accuracy above 90%. With a ±1 tolerance, the model scores more than 95% of papers correctly on a score band from 0 to 6.
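The evaluation described above can be sketched as follows: hold out 20% of the labelled papers as a test set with a seeded shuffle, then compare predicted bands against examiners’ bands, once requiring an exact match and once allowing a ±1 tolerance. We assume here that “±1 tolerance” means a prediction counts as correct when it is within one band of the human score; the band values in the usage example are made up.

```python
import random

def train_test_split(records, test_ratio=0.2, seed=42):
    """Shuffle reproducibly, then hold out test_ratio of the records for testing."""
    shuffled = list(records)
    random.Random(seed).shuffle(shuffled)
    n_test = round(len(shuffled) * test_ratio)
    cut = len(shuffled) - n_test
    return shuffled[:cut], shuffled[cut:]

def band_accuracy(predicted, actual, tolerance=0):
    """Fraction of papers whose predicted band is within `tolerance` of the examiner's band."""
    if len(predicted) != len(actual) or not actual:
        raise ValueError("need two non-empty lists of equal length")
    hits = sum(abs(p - a) <= tolerance for p, a in zip(predicted, actual))
    return hits / len(actual)

train, test = train_test_split(range(100))
print(len(train), len(test))  # 80 20

predicted = [3, 4, 5, 2, 6]
actual = [3, 5, 5, 0, 6]
print(band_accuracy(predicted, actual))               # 0.6 (3 of 5 exact)
print(band_accuracy(predicted, actual, tolerance=1))  # 0.8 (4 of 5 within one band)
```

Seeding the shuffle keeps the split reproducible, so accuracy figures from different experiments are measured on the same held-out papers.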

Online exams are also becoming more popular. To ensure tests are taken honestly and to prevent fraud, machine learning is also applied to camera surveillance. If the system detects that the candidate does not match the registered identity, it sends a warning to the back office and the results are dismissed.

Alongside camera surveillance, NashTech is also developing PoCs for remote test centres based on keyboard pattern detection: the system identifies a candidate’s typing pattern and checks whether it matches the behaviour it previously learned from that candidate.
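Keystroke dynamics can be illustrated with a deliberately simple sketch: enrol a candidate by averaging the intervals between key presses over a few typings of the same phrase, then accept a later attempt only if its intervals stay close to that profile. The timestamps and the 0.05-second threshold are hypothetical, and production systems would use richer features (such as key hold times) and a trained model rather than a fixed threshold.

```python
from statistics import mean

def intervals(timestamps):
    """Time between consecutive key presses, in seconds."""
    return [later - earlier for earlier, later in zip(timestamps, timestamps[1:])]

def enrol(samples):
    """Average the inter-key intervals of several typings of the same phrase."""
    per_sample = [intervals(s) for s in samples]
    return [mean(column) for column in zip(*per_sample)]

def matches_profile(profile, attempt, threshold=0.05):
    """Accept the attempt if its mean absolute deviation from the profile is within the threshold."""
    attempt_intervals = intervals(attempt)
    if len(attempt_intervals) != len(profile):
        return False
    deviation = mean(abs(p - a) for p, a in zip(profile, attempt_intervals))
    return deviation <= threshold

# Hypothetical key-press timestamps (seconds) for the same four-key phrase.
profile = enrol([[0.0, 0.20, 0.35, 0.60], [0.0, 0.22, 0.37, 0.60]])
print(matches_profile(profile, [0.0, 0.21, 0.36, 0.60]))  # True: rhythm close to enrolment
print(matches_profile(profile, [0.0, 0.40, 0.50, 0.95]))  # False: noticeably different rhythm
```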

Smart album

Imagine you’re a photographer taking thousands of pictures a month, and you need to filter them by topic, place, person, age, gender or simple objects such as trees and furniture. Doing this manually takes a lot of time. What if a computer, backed by AI/ML, could offer a quicker and more efficient solution?

Smart photo management is another area where NashTech is applying machine learning and deep learning. Machines are trained to “understand” photos so that they can support image search. We have launched a PoC with several functions, such as:

- Counting people appearing in the photos

- Identifying the age, gender and emotion of those people; if a person has already been encoded, the machine can recognise them in photos

- Identifying other objects (furniture, trees, plants, etc.)

- Supporting search across up to 80 object classes, and letting end users train additional classes
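Once a detection model has labelled each photo, counting people and searching by object class become simple filters over its output. The sketch below assumes a hypothetical output format (a label and confidence score per detected object) and a 0.5 confidence cut-off; the detection model itself is not shown.

```python
from dataclasses import dataclass, field

@dataclass
class Detection:
    label: str         # object class predicted by the detector, e.g. "person"
    confidence: float  # detector's confidence score between 0 and 1

@dataclass
class Photo:
    name: str
    detections: list = field(default_factory=list)

def count_people(photo, min_confidence=0.5):
    """Count confident 'person' detections in one photo."""
    return sum(1 for d in photo.detections
               if d.label == "person" and d.confidence >= min_confidence)

def search(photos, label, min_confidence=0.5):
    """Return names of photos containing at least one confident detection of `label`."""
    return [p.name for p in photos
            if any(d.label == label and d.confidence >= min_confidence for d in p.detections)]

album = [
    Photo("beach.jpg", [Detection("person", 0.92), Detection("person", 0.71), Detection("tree", 0.80)]),
    Photo("office.jpg", [Detection("chair", 0.95), Detection("person", 0.40)]),
]
print(count_people(album[0]))  # 2
print(count_people(album[1]))  # 0 (the 0.40 detection is below the cut-off)
print(search(album, "tree"))   # ['beach.jpg']
```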

Because the best experience for end users comes from the right blend of dataset and algorithm, NashTech is continuously looking for ways to enhance our PoCs. Once a PoC goes live, we collect end users’ feedback, combine it with our own validation of the machine’s output, and use it to retrain the model so that it produces better outcomes in the future.

NashTech AI platform

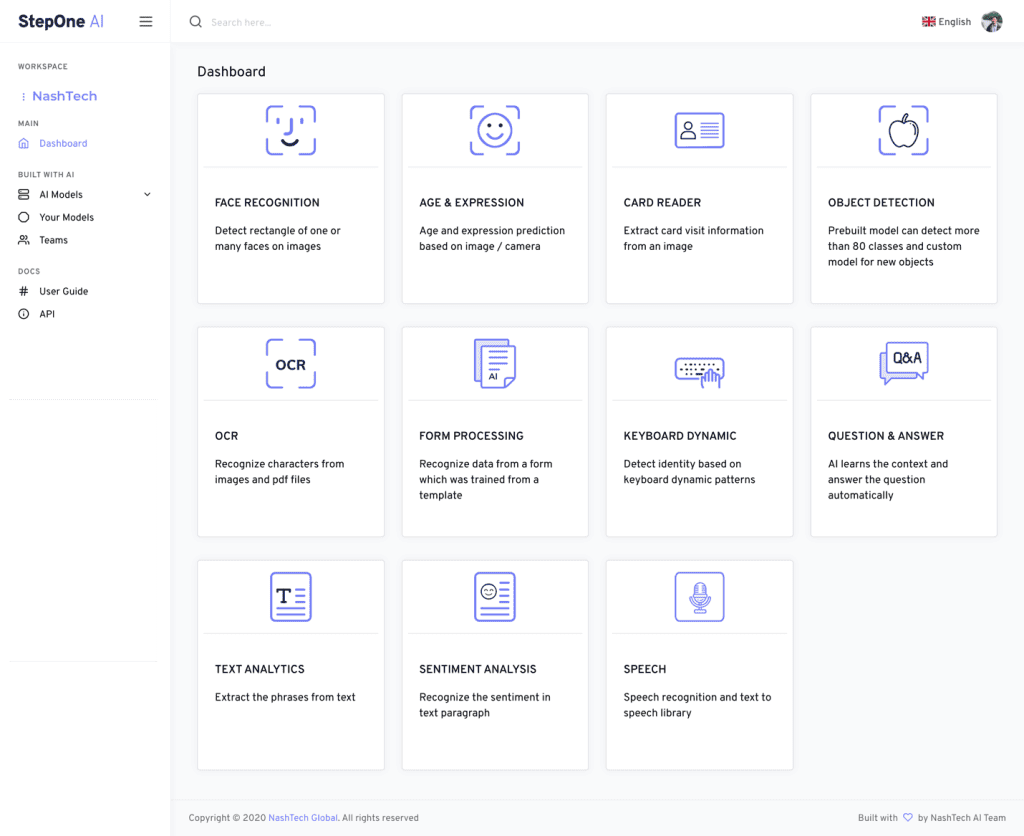

To accelerate the building of AI applications, NashTech has also developed its own AI platform, which combines machine learning, data science and artificial intelligence (AI). Solutions that need popular AI modules, such as computer vision (including face detection and recognition, age and expression prediction, and OCR), keyboard binding prediction for form extraction, sentiment analysis and text analytics, can take advantage of NashTech’s accelerator. The AI libraries are built on modern architecture and infrastructure, such as Kubernetes and microservices, with the aim of delivering production-ready AI applications.

Highlighted functions of these AI libraries include:

- Face detection, face recognition

- Question & answer (can be applied to chatbot applications)

- ID card prediction: extracting personal information from ID cards (in images or from a camera), which can be applied in digital know-your-customer (KYC) processes

- Form processing: extracting information from insurance claim forms, bills of lading, etc.

- Optical character recognition (OCR): converting documents, from scanned paper documents and scanned PDF files to digital images, into editable and searchable text

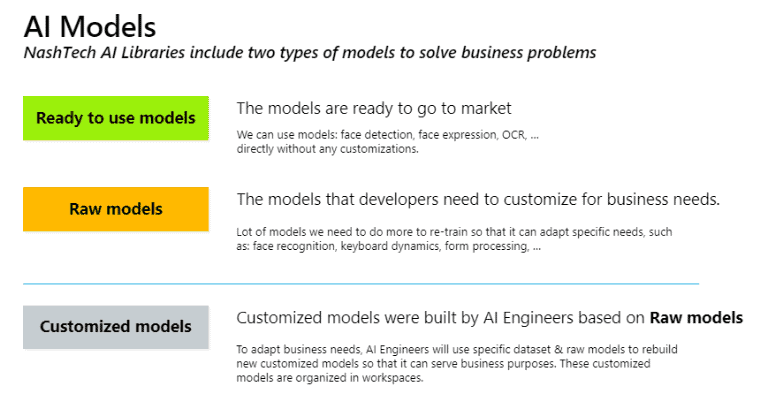

While most of NashTech’s AI libraries are ready to use, they do not always meet every client requirement. We can also build customised AI models based on a client’s own dataset, build and run the experiments on the client’s infrastructure (to ensure data privacy), and finally deploy them as a complete AI solution.

NashTech also integrates its AI platform with other emerging technologies such as Robotic Process Automation (RPA) to maximise smart automation.

Although the question “Are we nearly there yet?” has not been fully answered for artificial intelligence and machine learning, it is clear that a future in which humans and machines work together has already begun, and it comes into sharper focus day by day.

Looking ahead, NashTech will continue to invest in exploring advanced technologies, both to realise our full potential and capability and to enhance our customers’ experience.

With years of experience in delivering technology excellence, NashTech supports customers in exploring emerging technologies and applying them on their digital transformation journeys.

For more information, email info@nashtechglobal.com and a member of the team will be in touch. We would be delighted to talk to you about how machine learning and artificial intelligence can add real business value and help transform your business.
